Hey y’all –

Here’s a little story about tackling a grueling estimation process. It highlights some handy tactics for troubleshooting workflow issues, and I share some of my big takeaways from this growth journey.

Before I launch into it, I want to say I sincerely appreciate the group I talk about in this post. This episode was from the beginning of our six years (wow!) together. We ended up creating an elite product development group (also together). I have tremendous respect for every single member of that team. IDK, I felt the need to add this disclaimer before diving head-first into conflict and critique. There’s no growth in the comfort zone!

Also–next week is a holiday in the US, so we’re taking a little break from posting. See you in two weeks!

/ Dave

Estimation is a polarizing topic in software engineering. On one hand, stakeholders want an idea of what will be delivered and when. On the other hand, estimation can be perceived as micromanagement or waste, and it can trigger gamophobia (fear of commitment), particularly among the engineering ranks. In my experience, estimation usually isn’t the problem. It’s a symptom.

Today, I’d like to share a true story that illustrates this. I’ll wrap up with some arguments for and against estimates.

Hell is a grinding estimation session

In 2008, I took a job as the internal product development coach for VersionOne, a maker of an agile planning and tracking tool (the company is now part of Digital.ai).

One of the first things I noticed was that their estimation process seemed unnecessarily grueling. Refinement sessions involved lots of design, and there appeared to be a heavy emphasis on estimation accuracy. An hour-long session would produce only a couple of estimated stories.

Doing some back-of-the-envelope math:

Ten people in a refinement session

Let’s assume the average hourly wage for each person was ~$100 (probably more, but good enough for a rough calculation)

1-hour session

An average of 2 stories gets estimated each session

That’s $1,000 per session, so each estimate costs the company $500?!

The rent is too damn high!

A typical backlog for a quarterly release ran to around 100 stories, so estimating it cost roughly $50,000. For a smaller SaaS firm, that price was way too steep. We didn’t have big, dumb enterprise money to throw around. The cost didn’t justify the value of knowing how much time and effort each work item might take to complete.
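If you want to run the same napkin math for your own team, here is a minimal Python sketch. The numbers are the rough figures from this story; the variable names are mine, and the hourly rate is the same loose assumption as above.

```python
# Back-of-the-envelope cost of estimation, using the rough figures from the post.
attendees = 10              # people in a refinement session
hourly_rate_usd = 100       # assumed average hourly cost per person
session_hours = 1           # length of one session
stories_per_session = 2     # stories estimated per session
backlog_stories = 100       # typical backlog for a quarterly release

session_cost = attendees * hourly_rate_usd * session_hours
cost_per_estimate = session_cost / stories_per_session
release_cost = cost_per_estimate * backlog_stories

print(f"Cost per session:  ${session_cost:,.0f}")
print(f"Cost per estimate: ${cost_per_estimate:,.0f}")
print(f"Cost per release:  ${release_cost:,.0f}")
```

Swap in your own headcount and throughput. The point isn’t precision; it’s seeing the order of magnitude.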

Skill issues

Of course, this team used story points.

These fictional units of measure, centered on complexity, not time, invite debates about how complicated something is. Senior folks might recognize something as easy, whereas people new to the industry or codebase might call something complex.

More than anything, these debates shed light on the skills divide within the group.

The old timers had built an exceedingly clever system, with its arcane secrets held in their heads. Though it was .NET-based, it featured an XML-based type system, and that’s just the tip of the bikeshedding iceberg (to mix metaphors with free abandon). C#, apparently, didn’t do a good enough job and needed some help.

The rest of us? Objectively senior in our careers, we experienced much difficulty working with this exotica in all its undocumented glory.

Here’s another lesson worth learning. Remember when I said problems with estimation usually have a root cause somewhere else? In this case, the root cause was an uneven understanding of the system.

My suggestion at this time was to stop doing estimates altogether.

I mean… we’re a product company! Just work on the most important aspect of your product strategy!! Design quarterly releases to schedule and negotiate what features make it in before the drop date!!!

By the way, we had continuous deployment so we could keep shipping after a marketing release.

Logic and rhetoric vs. entrenched belief

This team took great pride in how “agile” their process was. Unfortunately, this meant following the dogma of thought lords rather than achieving the property of literal agility.

My hasty proto-#NoEstimates suggestion was met with resistance and skepticism.

I countered with more rhetoric and appeals to logic. They dug their heels in, with both product managers and developers emphasizing the importance of estimation. Coming from the latter group, this resistance seemed unusual, even nonsensical. I mean, what developer rejoices in estimation?

The PMs needed predictability. They needed to know what would make the release so they could coordinate with partner groups such as sales and marketing.

Developers wanted the chance to jam on design and stay aware of what was happening on the collectively shared codebase, especially since the company was growing pretty rapidly.

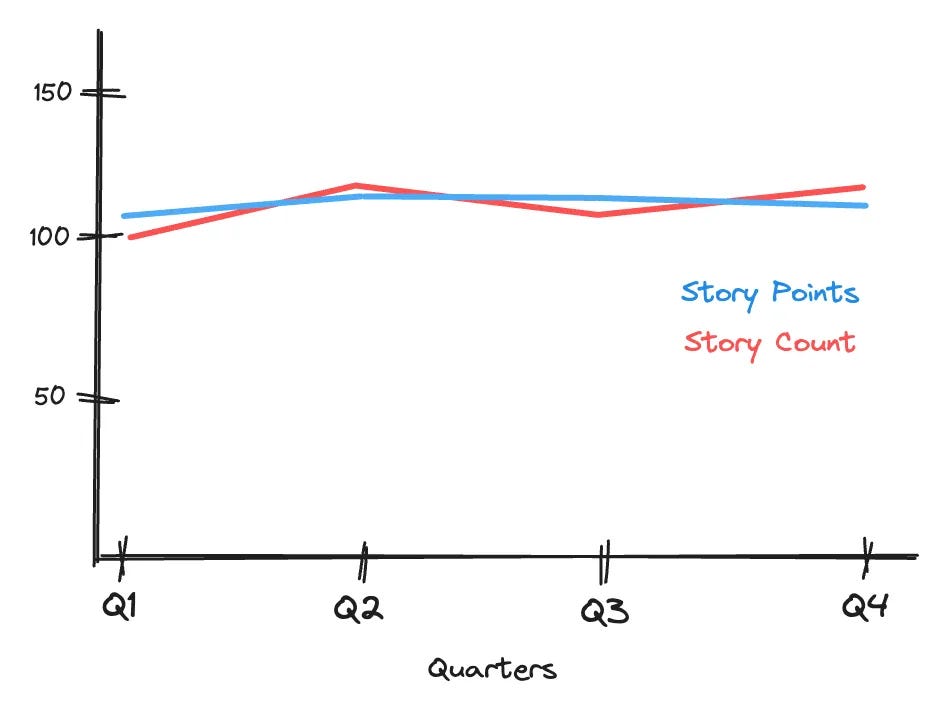

I wanted to reduce waste in the process. I didn’t make much headway until I remembered our product had an API. With this API, I could download historical data of stories completed over the last year. So I did that, and after an hour or two, I produced this graph:

You’re seeing the total points delivered in a quarter plotted along with the total count of stories delivered in a quarter. And, yes, this is the actual data.

There’s a strong, direct correlation here: something like r=0.956 (at least, that’s what ChatGPT tells me from the image). It’s strong enough that we might as well estimate every story as one and use the count of stories as yesterday’s weather.
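If you have the raw numbers rather than just an image, you can check the correlation yourself. Here’s a small sketch of the Pearson coefficient in plain Python. The quarterly figures below are made-up placeholders, not the data behind my graph, and the function name is my own.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a series is constant")
    return cov / sqrt(var_x * var_y)

# Placeholder numbers for illustration only, NOT the real VersionOne data.
stories_per_quarter = [38, 45, 52, 41]
points_per_quarter = [80, 96, 110, 85]

r = pearson_r(stories_per_quarter, points_per_quarter)
print(f"r = {r:.3f}")
```

A value close to 1 means story count tracks points closely enough that counting stories tells you roughly the same thing as summing points.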

Providing this data seemed to resonate with the group. Seeing this, they agreed to experiment with something else.

This goes to show you that a little data can add outsized weight to your intent. A graph, chart, or visualization beats words alone all day long.

Moving to time-based sizing

The group agreed that most of our stories could be accepted and given a point value of one—one story, one point. This stat will sound made up (because it is, but it is also close), but that was about 80% of our stories.

Pulling more data, we found that our average story took 3-5 days to complete. This finding led us to think more about time than complexity. Another story for another day, but we had started to shift our thinking to cycle time.

Eventually, with more data and process control charts and coaching, we arrived at the following scheme:

0 = Negligible work that wasn’t worth tracking. Think localization changes or pixel pushing.

0.5 = Under a day of work. A pair of developers might complete one to a few of these daily.

1.0 = 3-5 days, as mentioned earlier.

2.0 = 5-10 days with a special power. Developers would take a day and circle back with their PM to discuss realism, possible splits to smaller stories, etc.
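To make the scheme concrete, here’s a rough sketch of it as a lookup table in Python. We never had code like this; the names `SIZE_TO_DAYS` and `summarize`, the story titles, and the choice to treat 0.5 as "0 to 1 day" are my assumptions for illustration.

```python
# Size -> (min_days, max_days), transcribed from the scheme above.
# Treating 0.5 as "0 to 1 day" is an assumption; the scheme only says "under a day".
SIZE_TO_DAYS = {
    0: (0, 0),      # negligible, not worth tracking
    0.5: (0, 1),    # under a day
    1.0: (3, 5),    # the typical story
    2.0: (5, 10),   # big enough to warrant a check-in with the PM
}

def summarize(backlog):
    """Return (low_days, high_days, titles_needing_checkin) for a backlog.

    backlog is a list of (title, size) tuples, where size is a key of SIZE_TO_DAYS.
    """
    low = sum(SIZE_TO_DAYS[size][0] for _, size in backlog)
    high = sum(SIZE_TO_DAYS[size][1] for _, size in backlog)
    needs_checkin = [title for title, size in backlog if size == 2.0]
    return low, high, needs_checkin

# Hypothetical backlog for illustration.
example_backlog = [
    ("Fix button alignment", 0),
    ("Add CSV export", 1.0),
    ("Rework permissions model", 2.0),
    ("Update help link", 0.5),
]

low, high, needs_checkin = summarize(example_backlog)
print(f"Roughly {low}-{high} days of work")
print("Check in with PM after a day:", needs_checkin)
```

The day totals assume the stories are worked one after another, so treat them as a sizing aid rather than a calendar forecast.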

Within a month, estimation sessions produced dozens of estimated work items in an hour. Estimation had become what it was always intended to be—sizing work to understand what would make a release.

Estimation was now a practice that helped PMs communicate details about a release with their partners in other departments.

Developers found other, more appropriate venues for jamming on design and closing the skill gaps.

Lessons learned the hard way

It’s worth extracting some principles encountered in this journey. So let’s do that:

Visualized data is often the best way to make your case, especially a controversial one.

Everyone understands time. Story points are esoteric, highly relative, and subject to individual interpretation. Time isn’t immune to this, but it exposes things like skill gaps much more quickly.

Estimation is only worth it if you need predictability, which sometimes you do.

The site of pain is rarely the source.

In this story, more profound issues (developer productivity and technical debt) needed solving. Estimation, as it was, silently bundled these root-cause problems into the real but orthogonal need for predictability.

Separating these issues was a process, not a quick patch. It was a rough and bumpy road to get there, but we (all of us) gained some good experience points along the way.

Subsequent countermeasures certainly benefited from this newfound collective wisdom. An inclination to try new things (the team’s part) and an understanding of how to approach the team (my part) resulted in less drama and frustration.

Putting estimation in its proper place as a tool fit for a designated purpose helped us uncover and prioritize more pressing problems. The bigger win was that we all understood how to tackle thornier problems with more harmony and efficacy.